I expected another agent wrapper. Most frameworks I’d evaluated were thin layers over LLM APIs: chain some prompts, call it an agent. The Strands Agents SDK from AWS turned out to be different. What stood out wasn’t the convenience methods but three design decisions that changed how I think about agents: tools give agents agency, state gives them memory, and async lets them scale.

What Strands Agents Is

Strands Agents is an open-source Python framework from AWS for building AI agents. It’s model-agnostic, supporting Bedrock, Anthropic, OpenAI, Ollama, and others through a unified interface.

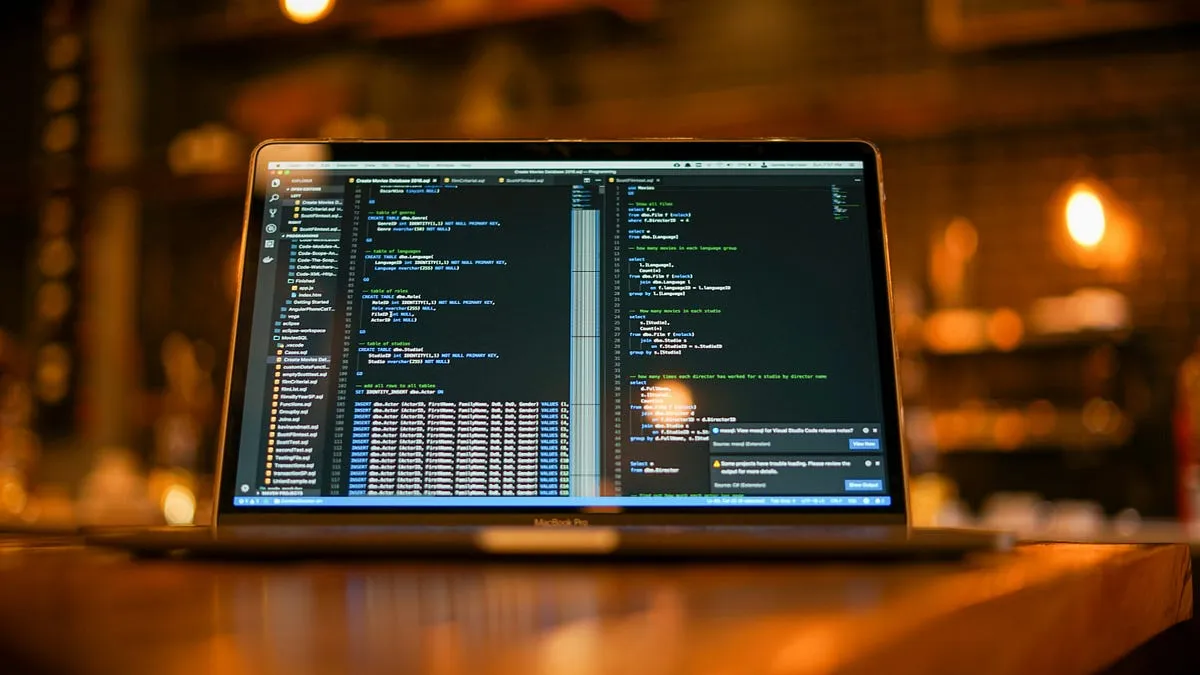

The minimal agent is three lines:

```python
from strands import Agent
agent = Agent()
response = agent("Summarize this document")
```

By default it uses Amazon Bedrock, but you can swap in any supported provider. The key design choice: Strands treats agents as long-lived processes, not stateless function calls. That forces you to think about lifecycle, persistence, and concurrency, the same concerns you’d have in any distributed system. It’s what shapes everything else in the SDK.

Tools Give Agents Agency

The first thing that clicked was how tools work. You define a function with the @tool decorator, and the agent decides when and whether to call it:

```python
from strands import Agent, tool


@tool
def word_count(text: str) -> int:
    """Count the words in a text string.

    Args:
        text: The input text to count.

    Returns:
        The number of words.
    """
    return len(text.split())


agent = Agent(tools=[word_count])
agent("How many words in 'hello world'?")
```

The docstring becomes the tool description. Type hints generate the JSON schema. The agent reads both and decides at runtime whether the tool is relevant.

That’s the shift. Tools don’t just extend what an agent can do. They give it the ability to act toward goals. An agent without tools can only talk. An agent with tools can do things.
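To make the docstring-and-type-hints idea concrete, here is a minimal standard-library sketch of how a decorator-style helper could turn a plain function into a tool description. It is not how Strands implements `@tool`; `build_tool_spec`, the `_JSON_TYPES` mapping, and the shape of the returned dictionary are all assumptions made for illustration.

```python
import inspect
from typing import get_type_hints

# Hypothetical mapping from Python types to JSON Schema type names.
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def build_tool_spec(func):
    """Derive a description and input schema from a plain function."""
    hints = get_type_hints(func)
    hints.pop("return", None)  # only parameters belong in the input schema

    # Use the first docstring line as the short description.
    doc = inspect.getdoc(func) or ""
    description = doc.splitlines()[0] if doc else ""

    properties = {
        name: {"type": _JSON_TYPES.get(hint, "string")}
        for name, hint in hints.items()
    }
    # Parameters without a default value are treated as required.
    required = [
        name
        for name, param in inspect.signature(func).parameters.items()
        if param.default is inspect.Parameter.empty
    ]
    return {
        "name": func.__name__,
        "description": description,
        "input_schema": {
            "type": "object",
            "properties": properties,
            "required": required,
        },
    }


def word_count(text: str) -> int:
    """Count the words in a text string."""
    return len(text.split())


spec = build_tool_spec(word_count)
print(spec)
```

The model only ever sees something like `spec`: a name, a description, and a schema. That is why a precise docstring and accurate type hints matter more than the function body when it comes to whether the agent picks the right tool.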

State Makes Agents Think

Without state, agents are amnesiac. They forget everything between messages. The Strands SDK makes state a first-class concept:

```python
from strands import Agent
from strands.session.file_session_manager import (
    FileSessionManager,
)

session = FileSessionManager(
    session_id="user-123",
    storage_dir="./sessions",
)

agent = Agent(
    session_manager=session,
    state={"preferences": {}, "history": []},
)
```

agent.state persists key-value data across invocations. FileSessionManager handles local persistence, and S3SessionManager does the same for cloud deployments. The session manager saves and restores both conversation history and custom state automatically.

I learned this the hard way. Before adding state, I had an agent that would ask users for their preferred output format every single time. Five messages into a session, it would ask again. Users noticed immediately. Once I wired up FileSessionManager and stored preferences in agent.state, the agent remembered. That’s the difference between a reactive chatbot and a useful assistant.
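The save-and-restore behavior described above can be sketched with nothing but the standard library. This is a conceptual illustration, not the Strands session manager: `FileBackedState`, its `get`/`set` methods, and the one-JSON-file-per-session layout are hypothetical names and choices made for this example.

```python
import json
import tempfile
from pathlib import Path


class FileBackedState:
    """Hypothetical key-value store that saves itself to a JSON file."""

    def __init__(self, session_id: str, storage_dir: str):
        self._path = Path(storage_dir) / f"{session_id}.json"
        # Restore earlier state if a file for this session already exists.
        if self._path.exists():
            self._data = json.loads(self._path.read_text())
        else:
            self._data = {}

    def get(self, key, default=None):
        return self._data.get(key, default)

    def set(self, key, value):
        # Write through to disk on every update so nothing is lost on exit.
        self._data[key] = value
        self._path.parent.mkdir(parents=True, exist_ok=True)
        self._path.write_text(json.dumps(self._data))


storage_dir = tempfile.mkdtemp()

# First "invocation": the user states a preference once.
first = FileBackedState("user-123", storage_dir)
first.set("preferences", {"output_format": "markdown"})

# Later "invocation": a fresh object with the same session ID sees it.
second = FileBackedState("user-123", storage_dir)
restored = second.get("preferences")
print(restored)
```

The point of the sketch is the session ID: state is keyed by it, so a new process that presents the same ID picks up where the previous one left off, and a different ID starts clean.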

Async and MCP Let Agents Scale

The third insight was how MCP servers plug in as tools:

```python
from mcp import stdio_client, StdioServerParameters
from strands import Agent
from strands.tools.mcp import MCPClient

docs = MCPClient(lambda: stdio_client(
    StdioServerParameters(
        command="uvx",
        args=[
            "awslabs.aws-documentation-mcp-server@latest",
        ],
    )
))

agent = Agent(tools=[docs])
agent("What is AWS Lambda?")
```

MCP servers expose external capabilities as tools that any agent can use. You can combine MCP servers with local @tool functions in the same agent.

Without async, tool calls serialize. One slow API blocks the entire agent loop. With async tools (using async def under @tool), the agent can fetch documentation, query a database, and run local computation concurrently. The difference is visible: a three-tool workflow that took 6 seconds synchronously dropped to under 2 seconds with async.

This is where it stops feeling like a script and starts feeling like a system. You have local tools for computation, MCP servers for external access, and async execution so they can work in parallel.
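The serial-versus-concurrent difference is easy to reproduce with plain `asyncio`, independent of any agent framework. The sketch below is not Strands code and does not reproduce the timings quoted above: `fake_tool`, the tool names, and the delays are stand-ins chosen for illustration.

```python
import asyncio
import time


async def fake_tool(name: str, delay: float) -> str:
    """Stand-in for an I/O-bound tool call (HTTP request, DB query, ...)."""
    await asyncio.sleep(delay)
    return f"{name} done"


# Hypothetical tool names and latencies, chosen only for illustration.
CALLS = [("fetch_docs", 0.2), ("query_db", 0.15), ("compute", 0.1)]


async def run_sequentially() -> list:
    # Each call waits for the previous one: total time is the sum of delays.
    return [await fake_tool(name, delay) for name, delay in CALLS]


async def run_concurrently() -> list:
    # All calls start together: total time is roughly the longest delay.
    return list(await asyncio.gather(*(fake_tool(n, d) for n, d in CALLS)))


def timed(coro_fn):
    """Run a coroutine function and return (results, elapsed seconds)."""
    start = time.perf_counter()
    results = asyncio.run(coro_fn())
    return results, time.perf_counter() - start


seq_results, seq_time = timed(run_sequentially)
con_results, con_time = timed(run_concurrently)
print(f"sequential: {seq_time:.2f}s, concurrent: {con_time:.2f}s")
```

Both versions return the same results in the same order. The gain only appears when the tools spend their time waiting on I/O; CPU-bound work inside an `async def` still blocks the event loop.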

Where It Fits

Strands Agents is a good fit for teams already on AWS or Bedrock, projects that need state management and tool orchestration, and anything requiring MCP integration. The learning curve is gentle: the three-line hello world above is real, working code, and the jump to production patterns (state, tools, async) is incremental.

The model-agnostic design means you’re not locked into Bedrock. If you switch providers, the agent code stays the same.
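One way to picture why the agent code can stay the same is an interface that every provider adapter satisfies. The sketch below is a generic illustration of that design, not the Strands model API: `Model`, `FakeBedrock`, `FakeOllama`, and `MiniAgent` are hypothetical names, and the fake providers just echo the prompt.

```python
from typing import Protocol


class Model(Protocol):
    """Hypothetical minimal interface every provider adapter satisfies."""

    def generate(self, prompt: str) -> str: ...


class FakeBedrock:
    def generate(self, prompt: str) -> str:
        return f"[bedrock] {prompt}"


class FakeOllama:
    def generate(self, prompt: str) -> str:
        return f"[ollama] {prompt}"


class MiniAgent:
    """Depends only on the Model interface, never on a concrete provider."""

    def __init__(self, model: Model):
        self.model = model

    def __call__(self, prompt: str) -> str:
        return self.model.generate(prompt)


def summarize(agent: MiniAgent) -> str:
    # Agent-facing code: identical regardless of the provider behind it.
    return agent("Summarize this document")


bedrock_answer = summarize(MiniAgent(FakeBedrock()))
ollama_answer = summarize(MiniAgent(FakeOllama()))
print(bedrock_answer)
print(ollama_answer)
```

Only the constructor argument changes between the two calls; `summarize` never learns which provider it is talking to.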

These three patterns (state, tools, async) also explain why agents that lack them tend to fail in production. An agent without state can’t maintain context. An agent without proper tool design can’t act reliably. An agent without async can’t handle real workloads.

If you want to try it yourself, the learning repo walks through each concept step by step.
